Thinking with Video: Video Generation as a Promising Multimodal Reasoning Paradigm

TL;DR

We introduce "Thinking with Video", a new paradigm that leverages video generation for multimodal reasoning. On our VideoThinkBench, Sora-2 surpasses GPT-5 by 10% on eyeballing puzzles and reaches 69% accuracy on MMMU.

Abstract

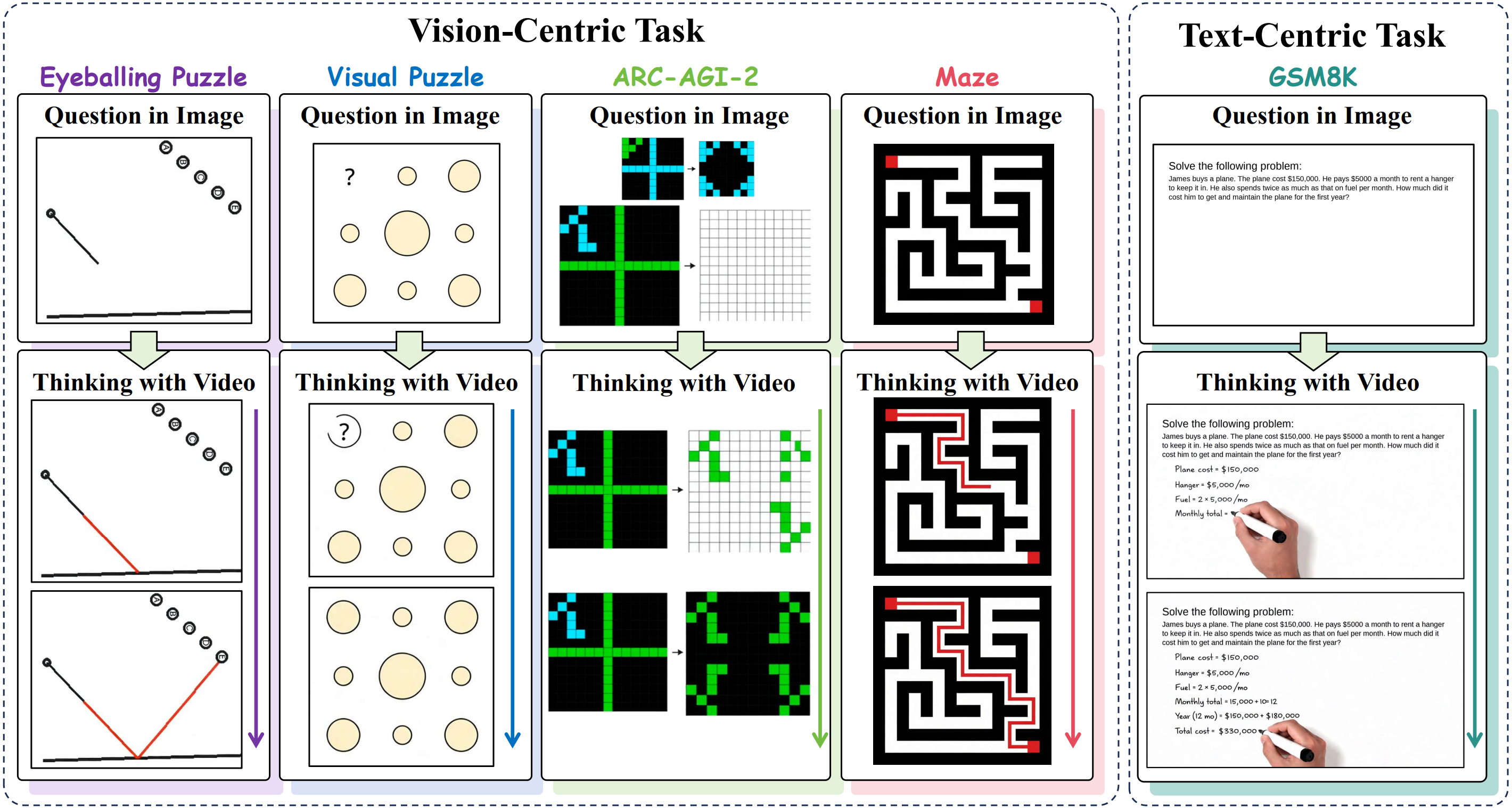

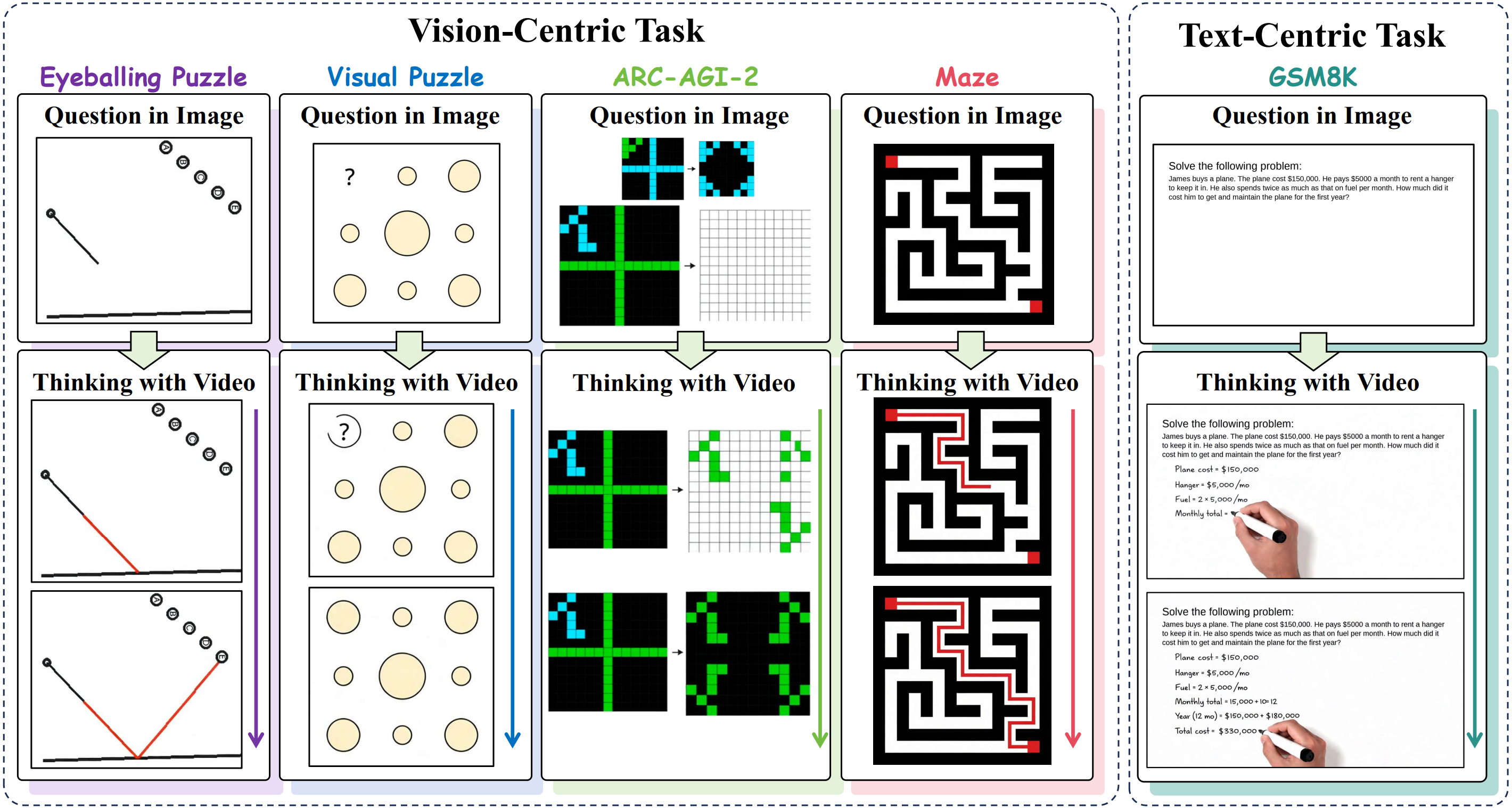

The "Thinking with Text" and "Thinking with Images" paradigms significantly improve the reasoning abilities of large language models (LLMs) and vision-language models (VLMs). However, these paradigms have inherent limitations: (1) images capture only single moments and cannot represent dynamic processes or continuous changes, and (2) treating text and vision as separate modalities hinders unified multimodal understanding and generation. Therefore, we propose "Thinking with Video", a new paradigm that leverages video generation models such as Sora-2 to use video frames as a unified medium for multimodal reasoning. To support this exploration, we developed the Video Thinking Benchmark (VideoThinkBench), which covers both vision-centric tasks (e.g., Eyeballing Puzzles) and text-centric tasks (e.g., GSM8K and MMMU).

Our evaluation on VideoThinkBench establishes Sora-2 as a capable reasoner. On vision-centric tasks, Sora-2 is comparable to state-of-the-art (SOTA) VLMs and even surpasses GPT-5 by 10% on eyeballing puzzles. On text-centric tasks, Sora-2 achieves 92% accuracy on MATH and 69.2% accuracy on MMMU. Furthermore, we systematically analyze the source of these abilities, and we find that self-consistency and in-context learning can improve Sora-2's performance.

In summary, our findings suggest that video generation models are potential unified models for multimodal understanding and generation, positioning "Thinking with Video" as a potential unified multimodal reasoning paradigm.
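To illustrate the self-consistency idea mentioned above, here is a minimal sketch that assumes it takes the common form of majority voting over several independently sampled answers. The function name `majority_vote` and the sample answers are hypothetical; this page does not describe the authors' actual aggregation procedure, so treat this as an illustration rather than their method.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent non-empty answer, or None if there is none.

    Hypothetical helper: each element of `answers` is the answer string
    extracted from one independently generated sample.
    """
    # Drop samples from which no answer could be extracted.
    valid = [a.strip() for a in answers if a and a.strip()]
    if not valid:
        return None
    # Ties resolve to the answer that was encountered first.
    return Counter(valid).most_common(1)[0][0]


# Illustrative answers from five sampled generations (made-up data).
samples = ["B", "C", "B", "", "B"]
final_answer = majority_vote(samples)
```

Voting over several samples can only help when the correct answer is the most common one, so any gain depends on how often single samples are already right.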

Leaderboard on VideoThinkBench (minitest)

Vision-Centric Tasks

Video Generation Models 🎞️

Image Generation Models 🖼️

Vision-Language Models 📃

Note:

"Eyeballing Point/Line/Shape" refer to the Point Tasks, Line Tasks and Shape Tasks in Eyeballing Puzzles. The results reported for video generation models are Major Frame evaluation results.

"Visual Symmetry/Gradient/Compositionality" refer to the Symmetry Tasks, Gradient Tasks and Compositionality Tasks in Visual Puzzles.

"Maze Square/Hexagon/Circle" refer to Square Maze, Hexagon Maze and Circle Maze in Maze Tasks.